Why Most Banks Will Miss the AI Moment and How a Few Will Redefine It

Over the past decade, banks across the GCC have invested heavily in digital transformation. Mobile adoption is high, onboarding has improved, and customer data is more accessible than ever.

Yet a more fundamental issue persists: experiences remain inconsistent, fragmented, and difficult to scale.

A retail banking customer in the UAE can receive a pre-approved credit offer through a mobile app, only to be asked for the same documents again when visiting a branch. In KSA, a wealth client may interact with a relationship manager who lacks visibility into recent digital activity, leading to generic advice rather than contextual recommendations.

At the same time, regulatory expectations are evolving. Authorities across the region are placing increasing emphasis on:

- Transparency in automated decisioning

- Fair treatment in lending and advisory

- Traceability of customer interactions

This creates a structural tension. Banks must deliver more personalized, real-time experiences, while ensuring that every decision and interaction can be explained, audited, and justified.

AI appears to resolve this tension. In practice, it often amplifies it.

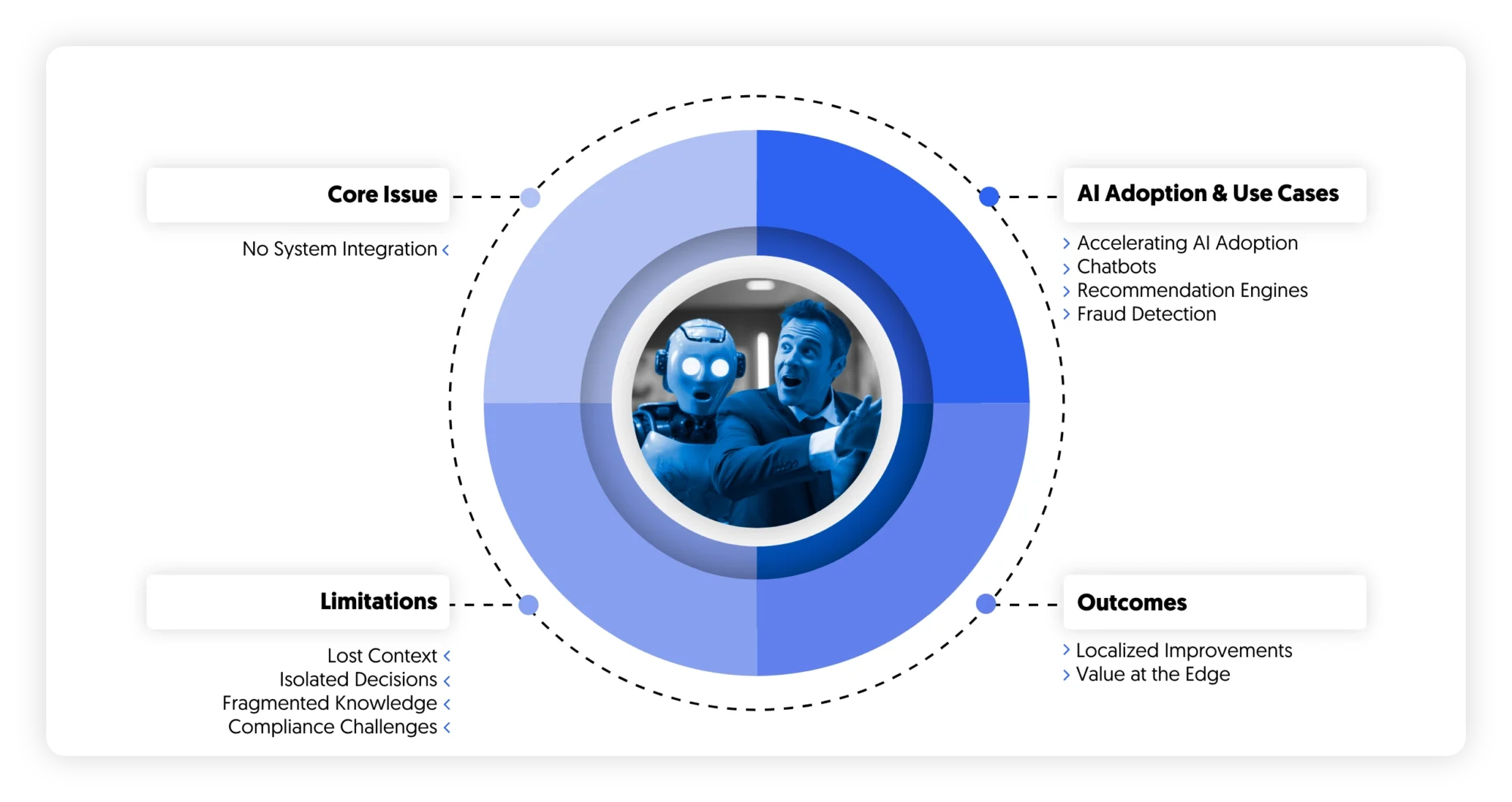

The illusion of progress: why pilots fail to transform experience

Across banks in the UAE and KSA, investment in advanced analytics, automation, and decisioning capabilities has moved quickly from experimentation to early-scale deployment. Most large institutions now operate a portfolio of use cases across servicing, sales, and risk. Chat interfaces handle routine inquiries, recommendation engines drive cross-sell in digital channels, and fraud models are embedded into onboarding journeys.

Individually, these initiatives often perform well. Contact center volumes decline modestly as simple interactions are deflected. Campaign response rates improve when targeting becomes more precise. Fraud detection becomes faster and more accurate at specific checkpoints. These gains are real and, in many cases, measurable within months of deployment.

What is less visible is their limited impact on the overall experience.

Customer journeys remain fragmented. Operational inefficiencies persist. In many cases, the introduction of new capabilities adds complexity rather than reducing it. The result is a growing gap between perceived progress, often reflected in internal reporting, and the lived reality of customers and frontline teams.

This gap is largely structural. Most deployments are designed to optimize discrete touchpoints rather than reconfigure end-to-end journeys. They sit alongside existing processes, systems, and data flows, which were not originally designed to support continuous, context-aware interactions. As a result, improvements do not extend beyond the boundaries of the specific use case.

A recurring issue is the lack of continuity across channels. Customer interactions are still tied to the system in which they originate, whether mobile, web, or contact center. When a journey spans multiple touchpoints, context is only partially transferred, if at all. Customers are required to restate information, and agents must rebuild understanding from fragmented records. This not only affects experience quality but also increases handling time and operational cost.

Decisioning follows a similar pattern. While many banks have introduced next-best-action capabilities, these are often triggered at predefined moments rather than operating continuously. They rely on snapshots of customer data instead of dynamically evolving context. As a result, recommendations can be locally optimized but globally inconsistent. A customer may receive an offer that aligns with past behavior but ignores recent signals or broader financial circumstances, creating both experience and compliance concerns.

Knowledge fragmentation further compounds the problem. Product information, policy rules, and regulatory requirements are typically distributed across multiple repositories, each with its own update cycles and ownership. Frontline teams develop informal ways to navigate this complexity, but automated systems struggle to do so reliably. Without a unified and governed knowledge layer, consistency in responses remains difficult to achieve, particularly in regulated interactions.

These dynamics are evident in operational settings. In one regional bank, a conversational interface successfully absorbed a significant share of routine servicing requests. However, escalation processes were not redesigned in parallel. When interactions moved to human agents, prior context was not transferred in a structured way. Agents restarted conversations, increasing handle times and offsetting efficiency gains achieved upstream. The technology improved a specific interaction, but the overall journey remained disjointed.

In another case, a Saudi institution deployed a next-best-offer engine focused on credit card acquisition. Conversion rates improved within targeted segments, but the decisioning logic operated with limited integration into broader affordability and risk frameworks. This led to internal challenges from risk and compliance teams, slowing further rollout and reducing confidence in scaling the approach.

Across the market, similar patterns emerge. Value is created within individual use cases but does not accumulate across the system. Gains in one area are often diluted by inefficiencies in another. Over time, this leads to diminishing returns on additional deployments.

The implication is that the limiting factor is no longer the performance of individual models or tools. It is the absence of an integrated experience architecture. Without shared data foundations, continuous decisioning, and consistent knowledge governance, new capabilities remain constrained within their original scope.

For banks seeking meaningful impact, the priority shifts from adding more use cases to connecting existing ones. The focus moves toward designing journeys as coherent systems, where context persists, decisions evolve, and interactions reinforce one another over time.

Until this shift takes place, progress will continue to be visible in isolated metrics, but difficult to translate into sustained improvements in customer value, operational efficiency, or risk outcomes.

What leading banks are doing differently

A small but growing group of institutions in the region is beginning to break away from the pattern of isolated deployments. Their distinguishing characteristic is not the sophistication of individual use cases, but the way those use cases are connected and embedded into broader experience flows.

These banks are treating experience management as a system with interdependent components rather than a collection of optimizations. This shift changes where value is created. Instead of focusing on improving performance at specific touchpoints, they focus on how interactions accumulate across the lifecycle of a customer relationship.

One area where this becomes visible is onboarding.

In many banks, onboarding is still designed as a fixed sequence of steps, with limited flexibility once the process begins. Leading institutions are rethinking this structure. Rather than enforcing a predefined flow, they treat onboarding as a dynamic process that adapts to customer behavior and risk signals in real time.

In practice, this means that signals such as hesitation, inactivity, or repeated errors are interpreted as indicators of friction rather than failure. Customers exhibiting these patterns are redirected toward assisted channels before they drop off entirely. At the same time, process steps are no longer rigid. Document verification, identity checks, and data capture can be reordered based on the likelihood of completion and the customer’s profile.

Risk assessment is integrated into this flow rather than applied as a separate control layer. Customers with lower risk profiles can move through fewer steps, while higher-risk cases trigger additional checks without disrupting the overall experience. The outcome is not only faster onboarding, but a more efficient allocation of operational and compliance effort.
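The adaptive flow described above can be sketched in a few lines. This is a minimal illustration, not a description of any bank's implementation: the step names, completion rates, risk threshold, and friction thresholds are all invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    name: str
    completion_rate: float  # hypothetical share of customers who finish this step
    min_risk: float = 0.0   # step is required only at or above this risk score


# Illustrative step catalogue; names and numbers are assumptions.
STEPS = [
    Step("data_capture", 0.95),
    Step("identity_check", 0.90),
    Step("document_verification", 0.80),
    Step("source_of_funds_review", 0.60, min_risk=0.7),
]


def plan_steps(risk_score: float) -> list[str]:
    """Keep only the steps this risk level requires, easiest first."""
    required = [s for s in STEPS if risk_score >= s.min_risk]
    ordered = sorted(required, key=lambda s: s.completion_rate, reverse=True)
    return [s.name for s in ordered]


def needs_assistance(idle_seconds: int, error_count: int) -> bool:
    """Treat inactivity or repeated errors as friction (assumed thresholds)."""
    return idle_seconds > 120 or error_count >= 3
```

A low-risk customer (`plan_steps(0.2)`) gets three steps, while a higher-risk one (`plan_steps(0.9)`) also gets the additional review, placed last because it has the lowest assumed completion rate.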

A similar shift is taking place in contact centers.

Instead of viewing automation as a means to deflect volume, leading banks are focusing on how to improve the effectiveness of human interactions. The contact center becomes a point of decision support rather than simply a channel for issue resolution.

Real-time access to customer context plays a central role. Agents are no longer required to navigate multiple systems to reconstruct a customer’s history. Instead, relevant information is synthesized into a coherent view at the moment of interaction. This includes recent activity, prior conversations, and current products held.

More importantly, guidance is embedded directly into the interaction. Recommended responses are aligned with internal policies, and potential compliance risks are surfaced as the conversation unfolds. This reduces reliance on post-call quality assurance and shifts control upstream, into the interaction itself.

The impact is not limited to efficiency metrics such as handle time. It affects the consistency and defensibility of decisions made in real time. By integrating policy and risk considerations into the flow of the conversation, these banks reduce the likelihood of errors that would otherwise be identified after the fact.

In wealth management, the shift is most apparent in how knowledge is accessed and applied.

Advisors traditionally rely on a combination of static documents, internal portals, and personal experience to provide guidance. This creates variability in both the quality and consistency of advice, particularly as products and regulations evolve.

Leading institutions are addressing this by restructuring knowledge as a governed, queryable layer. Instead of navigating documents, advisors can access specific, context-relevant information at the point of need. Responses are tied to clearly identified sources, allowing both the advisor and the institution to understand the basis for any recommendation.

This has two effects. It improves the quality of client interactions by reducing uncertainty and search time. At the same time, it strengthens alignment with regulatory expectations by ensuring that advice is grounded in up-to-date and approved information.

Across these examples, the common thread is not the use of advanced tools in isolation. It is the integration of decisioning, interaction, and knowledge into a single flow.

Leading banks are redesigning processes so that context is preserved, decisions evolve continuously, and control mechanisms are embedded within the experience rather than applied after it. As a result, improvements in one part of the journey reinforce improvements in others.

This is what allows value to scale.

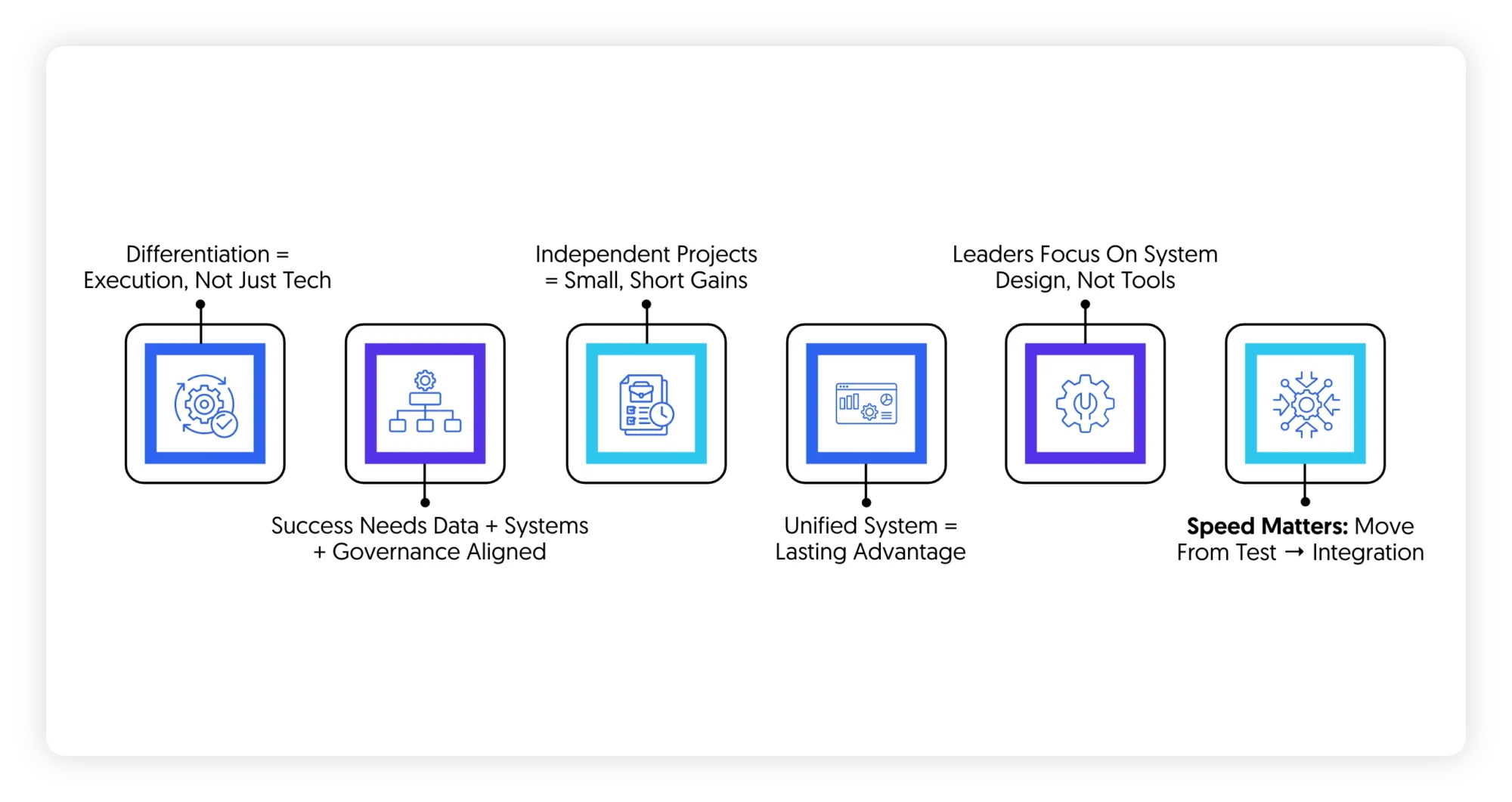

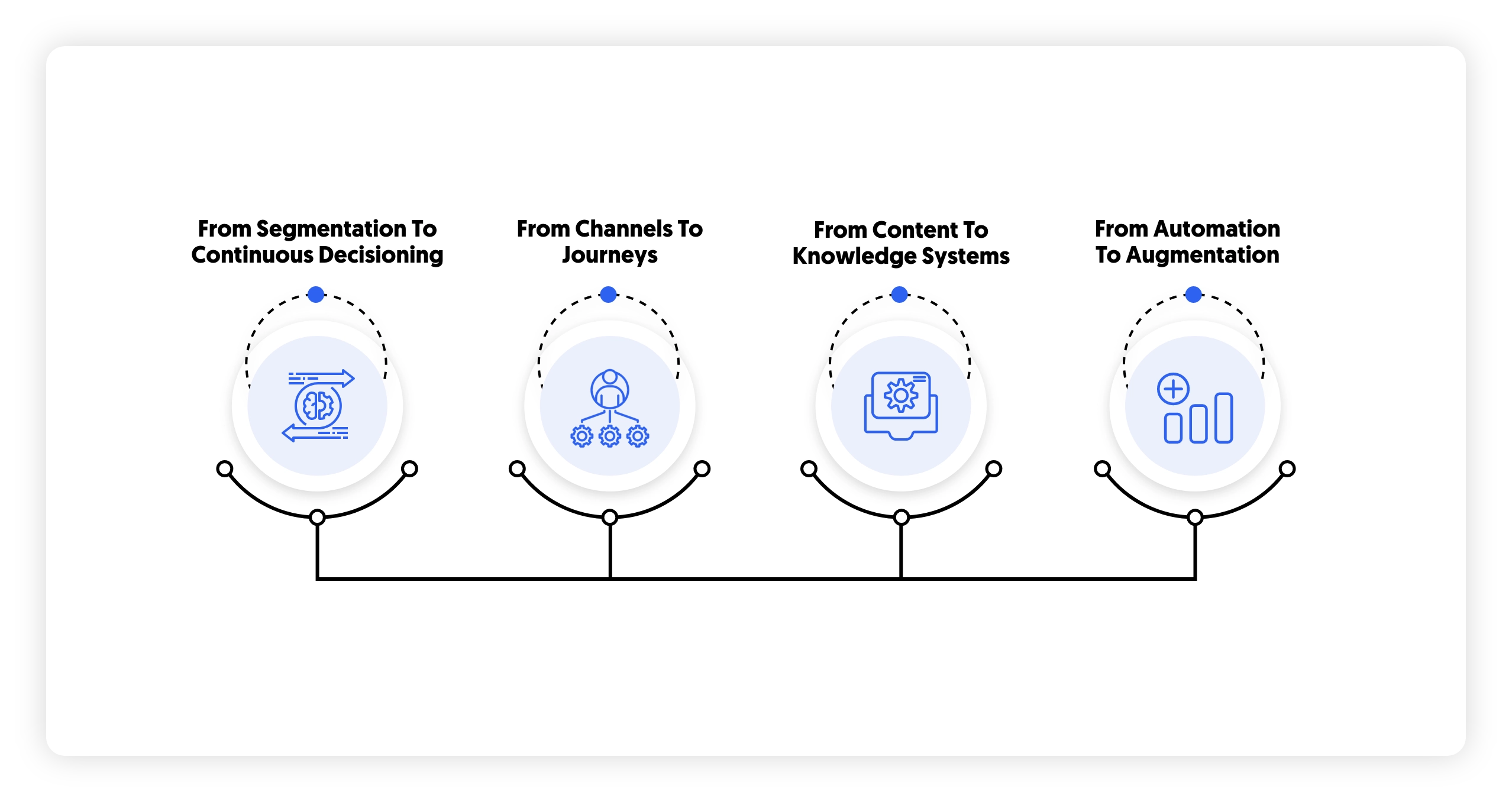

The shifts redefining experience management

What separates leading institutions is not the adoption of new technologies, but a set of structural shifts in how experience is designed, delivered, and governed. These shifts are subtle at first glance, but they fundamentally change how value is created and sustained.

The first shift concerns how decisions are made.

Most banks still rely on segmentation as the backbone of personalization. Customers are grouped into categories based on historical data, and predefined rules determine which offers or actions apply to each group. While this approach has been refined over time, it remains inherently static. It assumes that customer behavior is stable and that decisioning can be anchored to periodic updates.

Leading institutions are moving toward a different model, where decisioning is continuous rather than episodic. Customer context is updated in real time, incorporating recent interactions, behavioral signals, and changes in financial position. Decisions are recalibrated as this context evolves, rather than triggered at fixed points.

This has important implications. Personalization becomes less about targeting and more about timing and relevance. At the same time, risk considerations are integrated directly into decision flows. Instead of being applied as a separate validation step, they shape the decision itself. This reduces the likelihood of misaligned offers and creates a closer link between commercial outcomes and risk discipline.
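To make the contrast with snapshot-based decisioning concrete, the sketch below recomputes the recommended action every time the customer context changes, with an affordability check inside the decision rather than after it. The class, the event fields, and the 0.4 debt ratio limit are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field

MAX_DEBT_RATIO = 0.4  # assumed affordability limit, not a regulatory figure


@dataclass
class CustomerContext:
    monthly_income: float
    monthly_debt: float
    recent_signals: list[str] = field(default_factory=list)

    def apply(self, event: dict) -> None:
        """Fold a new event into the context as soon as it arrives."""
        self.monthly_income += event.get("income_change", 0.0)
        self.monthly_debt += event.get("debt_change", 0.0)
        if "signal" in event:
            self.recent_signals.append(event["signal"])


def next_best_action(ctx: CustomerContext) -> str:
    """Risk shapes the decision itself instead of validating it afterwards."""
    if ctx.monthly_income > 0:
        debt_ratio = ctx.monthly_debt / ctx.monthly_income
    else:
        debt_ratio = 1.0
    if debt_ratio > MAX_DEBT_RATIO:
        return "no_credit_offer"
    if "browsed_credit_cards" in ctx.recent_signals:
        return "credit_card_offer"
    return "no_action"
```

In this sketch, a customer who browsed credit cards initially qualifies for an offer. If a new loan then pushes the debt ratio above the limit, calling `next_best_action` again withdraws the offer without waiting for a periodic segment refresh.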

The second shift relates to how experiences are structured.

Historically, banks have optimized performance at the level of individual channels. Digital teams focus on mobile and web journeys, contact center teams focus on call handling, and branch networks operate with their own processes and metrics. While coordination exists, it is often limited and reactive.

Leading banks are redefining the unit of optimization. The focus moves from channels to journeys that span multiple touchpoints over time. These journeys are analyzed as sequences of interactions, where the outcome depends on how well each step connects to the next.

This perspective reveals patterns that are not visible at the channel level. Points of friction, such as repeated data entry or inconsistent messaging, become easier to identify. More importantly, it enables targeted interventions. Instead of waiting for a failure to occur, banks can anticipate where customers are likely to disengage and act before that point is reached.

Achieving this requires more than analytics. It depends on the ability to maintain a consistent view of the customer across systems and to coordinate actions across channels with minimal delay. Without this foundation, journey-level optimization remains theoretical.

The third shift addresses how knowledge is managed.

In most institutions, knowledge is distributed across documents, systems, and teams. Product details, policy rules, and regulatory guidance are maintained separately, often with different update cycles and ownership structures. This fragmentation introduces variability into both human and automated interactions.

Leading banks are consolidating this into governed knowledge systems that can be accessed in a structured and consistent way. Information is no longer retrieved as documents, but as precise answers linked to specific sources. Versioning becomes explicit, allowing institutions to track which policies or guidelines were in effect at the time of an interaction.

This approach changes how accuracy and compliance are ensured. Rather than relying on individuals to interpret and apply information correctly, the system itself enforces consistency. It also creates a foundation for scaling interactions, as both customers and employees can rely on the same underlying knowledge layer.
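One way to picture explicit versioning is a lookup that returns the policy text in effect on a given date, together with its source. The following is a minimal sketch under that assumption; `GovernedKnowledge`, `PolicyVersion`, and their fields are hypothetical names, not a reference to any specific product.

```python
from bisect import bisect_right
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PolicyVersion:
    effective_from: date
    version: str
    source: str  # identifier of the approved document behind the answer
    text: str


class GovernedKnowledge:
    def __init__(self) -> None:
        self._versions: dict[str, list[PolicyVersion]] = {}

    def publish(self, topic: str, policy: PolicyVersion) -> None:
        """Register a new version and keep versions ordered by effective date."""
        versions = self._versions.setdefault(topic, [])
        versions.append(policy)
        versions.sort(key=lambda v: v.effective_from)

    def answer(self, topic: str, as_of: date) -> PolicyVersion:
        """Return the version that was in effect on the given date."""
        versions = self._versions.get(topic, [])
        idx = bisect_right([v.effective_from for v in versions], as_of)
        if idx == 0:
            raise LookupError(f"no policy in effect for {topic!r} on {as_of}")
        return versions[idx - 1]
```

Because every answer carries a `version` and a `source`, an interaction recorded months earlier can be matched to the policy that applied at the time rather than to whatever is current today.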

The fourth shift concerns the role of automation in the workforce.

Early efforts focused on replacing human effort in routine tasks. While this remains relevant, it has become clear that the more significant opportunity lies in improving how human decisions are made.

Leading institutions are therefore prioritizing augmentation over substitution. The objective is to reduce the cognitive load on frontline teams by providing timely, context-specific support during interactions. This includes synthesizing customer information, suggesting next steps, and highlighting potential risks.

The impact extends beyond efficiency. By embedding guidance directly into workflows, banks improve the consistency and defensibility of decisions made by their employees. This is particularly important in regulated environments, where deviations from policy can have significant consequences.

Taken together, these shifts point to a broader change in operating model.

Experience management becomes a coordinated system in which data, decisioning, interaction, and knowledge are tightly integrated. Improvements are no longer confined to individual touchpoints but propagate across journeys. Control mechanisms are embedded within processes rather than applied after the fact.

This is what allows leading banks to move beyond incremental gains and achieve sustained impact at scale.

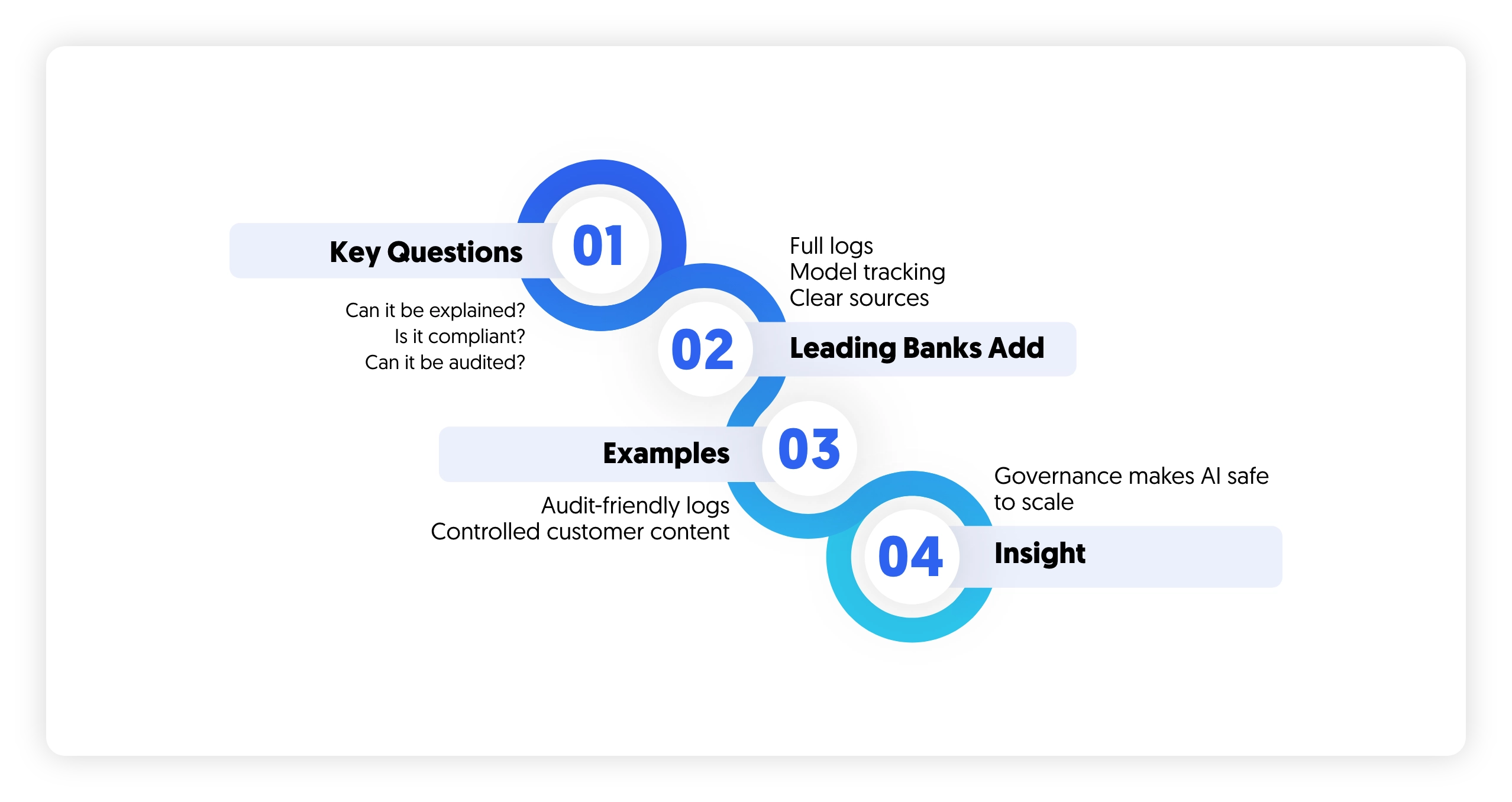

Trust as infrastructure: why governance determines scale

In financial services, scaling advanced decisioning and automation is less a question of technical capability than of institutional trust. The constraint is not whether systems can generate recommendations or responses, but whether those outputs can be relied upon in environments where accountability, traceability, and fairness are non-negotiable.

Every automated or semi-automated interaction introduces a set of implicit requirements. Decisions must be explainable to internal stakeholders and, increasingly, to customers. Outcomes must align with regulatory expectations that continue to evolve across jurisdictions. Interactions must be reconstructable in the event of disputes, audits, or supervisory reviews. Without credible answers to these requirements, even high-performing systems struggle to move beyond controlled deployments.

What distinguishes leading institutions is how they address these constraints. Rather than treating governance as an overlay, they incorporate it directly into the architecture of experience delivery.

A core element is the creation of comprehensive interaction records. Instead of logging only final outcomes, these institutions capture the full chain of events that led to a decision or response. This includes inputs, intermediate steps, and the specific logic or model state applied at the time. The result is a level of traceability that supports both internal oversight and external scrutiny.

Closely linked to this is the discipline of version control. Decisioning systems and content generation mechanisms evolve continuously, but without clear versioning, it becomes difficult to understand why a particular outcome occurred. Leading banks maintain explicit links between outputs and the exact configuration (models, rules, and knowledge sources) that produced them. This allows issues to be diagnosed precisely and reduces ambiguity during audits.
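A simple way to illustrate both ideas, the full interaction record and the link to configuration, is an append-only log in which each record stores its inputs, intermediate steps, output, and configuration versions, plus a hash of the previous record. The sketch below is an assumption about how such a log could look, not a description of any bank's system. Hash chaining makes tampering detectable; it does not by itself make storage immutable.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first record


def _digest(payload: dict) -> str:
    """Stable SHA-256 over a JSON-serializable payload."""
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


class InteractionLog:
    def __init__(self) -> None:
        self._records: list[dict] = []

    def append(self, inputs: dict, steps: list, output: str, config: dict) -> dict:
        """Store the full chain of events plus the configuration that produced it."""
        prev_hash = self._records[-1]["hash"] if self._records else GENESIS_HASH
        body = {
            "inputs": inputs,
            "steps": steps,
            "output": output,
            "config": config,  # e.g. model, rules, and knowledge versions
            "prev_hash": prev_hash,
        }
        record = dict(body, hash=_digest(body))
        self._records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered record breaks the chain."""
        prev_hash = GENESIS_HASH
        for record in self._records:
            body = {k: v for k, v in record.items() if k != "hash"}
            if record["prev_hash"] != prev_hash or _digest(body) != record["hash"]:
                return False
            prev_hash = record["hash"]
        return True
```

In practice the `config` field would carry whatever identifiers the institution uses for its models, rules, and knowledge sources; the keys used in any example call are placeholders.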

Knowledge attribution plays a similarly important role. When responses are generated from internal policies or regulatory documents, the ability to identify the underlying source is critical. It provides assurance that interactions are grounded in approved material and allows institutions to demonstrate consistency in how information is applied. Over time, this also builds confidence among frontline teams, who can see not just what the system recommends, but why.

Monitoring extends these controls into ongoing operations. Rather than relying on periodic reviews, leading institutions implement continuous oversight of decision quality, fairness, and alignment with policy. This is particularly relevant in areas such as lending and advisory, where unintended bias or drift can emerge as data and behavior change. Early detection allows corrective action before issues escalate into regulatory concerns.
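As one illustration of continuous oversight, the sketch below compares approval rates across customer groups and flags a gap once the ratio between the lowest and highest rate falls under a threshold. The grouping, the metric, and the 0.8 threshold are assumptions made for the example; an actual monitoring programme would choose metrics and limits in line with its own policies and supervisory guidance.

```python
from collections import defaultdict

ALERT_THRESHOLD = 0.8  # assumed internal limit, not a regulatory requirement


def approval_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of approved decisions per group; each item is (group, approved)."""
    totals: dict[str, int] = defaultdict(int)
    approved: dict[str, int] = defaultdict(int)
    for group, is_approved in decisions:
        totals[group] += 1
        approved[group] += int(is_approved)
    return {group: approved[group] / totals[group] for group in totals}


def disparity_ratio(rates: dict[str, float]) -> float:
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


def needs_review(decisions: list[tuple[str, bool]]) -> bool:
    """Flag the decision stream when the gap between groups is too wide."""
    return disparity_ratio(approval_rates(decisions)) < ALERT_THRESHOLD
```

Run on a rolling window of recent decisions, a check like this surfaces drift as it emerges rather than at the next periodic review.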

Examples from the region illustrate how these principles are applied in practice.

One GCC bank introduced immutable interaction records across its conversational interfaces. Each customer interaction could be traced back to the precise configuration of the system at the time it occurred, including the underlying knowledge sources. This significantly reduced the effort required for compliance reviews and improved the bank’s ability to respond to regulatory inquiries with confidence and speed.

Another institution focused on tightening control over outbound communications. Automated responses were constrained within a governed framework that ensured required disclosures were consistently included and that language adhered to pre-approved standards. This reduced variability in customer communications and limited the risk of non-compliant messaging, particularly in high-volume channels such as chat and messaging platforms.
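A governed framework of this kind can be approximated by a check that runs before any automated message is sent. The sketch below is illustrative only: the disclosure texts, the banned phrases, and the product key are invented, and real standards would come from the institution's approved content library.

```python
# Hypothetical pre-approved content rules, for illustration only.
REQUIRED_DISCLOSURES = {
    "credit_card": ["Terms and conditions apply.", "Subject to credit approval."],
}
BANNED_PHRASES = ["guaranteed approval", "risk-free"]


def check_message(product: str, message: str) -> list[str]:
    """Return a list of compliance issues; an empty list means the message may be sent."""
    issues = []
    lowered = message.lower()
    for disclosure in REQUIRED_DISCLOSURES.get(product, []):
        if disclosure.lower() not in lowered:
            issues.append(f"missing disclosure: {disclosure}")
    for phrase in BANNED_PHRASES:
        if phrase in lowered:
            issues.append(f"banned phrase: {phrase}")
    return issues
```

Blocking or rerouting any message with a non-empty issue list keeps the control inside the interaction, in line with the upstream approach described earlier for contact centers.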

These approaches reflect a broader shift in how governance is perceived. It is no longer viewed as a constraint on innovation, but as an enabling layer. By creating clear rules, visibility, and accountability, governance allows institutions to deploy advanced capabilities with greater confidence. It reduces the need for manual oversight, shortens approval cycles, and supports more rapid iteration within defined boundaries.

As a result, the ability to scale is directly linked to the strength of this infrastructure. Institutions that invest in embedded governance are better positioned to extend capabilities across channels and use cases. Those that do not remain limited to narrow applications, where risks can be contained but value remains constrained.

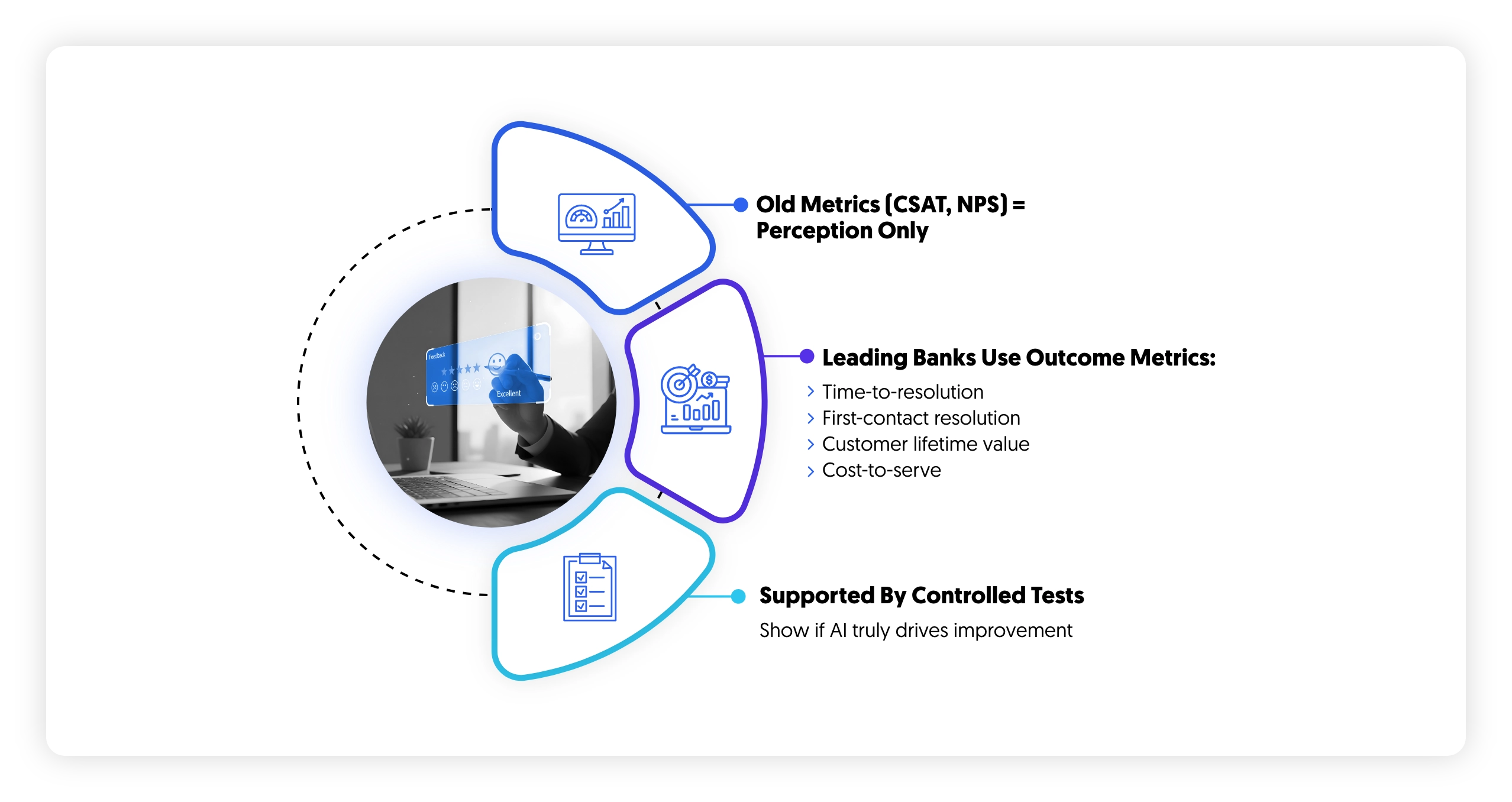

Measuring what matters: from satisfaction to value

A further point of divergence among leading banks lies in how impact is defined and measured. Measurement is often treated as a downstream activity, used to validate initiatives after deployment. In practice, it shapes what gets scaled, what gets funded, and what is ultimately embedded into operating models.

Traditional experience metrics such as CSAT and NPS continue to be used across the industry. They provide useful directional signals on customer perception and sentiment. However, they are limited in what they reveal about underlying performance. High satisfaction scores can coexist with inefficient operations, fragmented journeys, or weak commercial outcomes. They describe how customers feel, not how the system performs.

Leading institutions are therefore shifting toward metrics that directly connect experience to operational and financial outcomes. The emphasis moves from perception to value creation across the lifecycle.

Time-to-resolution becomes a primary indicator of how efficiently the organization resolves customer needs across channels and functions. It reflects not only frontline performance but also the degree of integration between systems, as delays often originate from fragmented access to information or unclear ownership across processes.

First-contact resolution provides a complementary view of effectiveness. It captures whether issues are resolved within a single interaction, which in turn depends on the quality of context available to agents, the maturity of decisioning support, and the consistency of underlying knowledge. Improvements in this metric typically indicate deeper structural alignment rather than incremental operational gains.

Customer lifetime value introduces a longer-term lens. It shifts attention from individual transactions or campaigns to the cumulative impact of experience quality over time. In this context, personalization, retention interventions, and service consistency are evaluated based on their contribution to sustained relationship value rather than short-term conversion.

Cost-to-serve provides the operational counterbalance. As institutions introduce automation and augmentation, this metric captures whether efficiency gains are actually realized at scale. It also helps identify whether improvements in one part of the journey are offset by complexity elsewhere in the system.
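Three of these metrics can be computed from the same case log, which is part of their appeal. The sketch below assumes a simplified record per resolved case; the field names and the definition of cost-to-serve as total handling cost per distinct customer are assumptions, and institutions will define each metric according to their own data. Customer lifetime value is omitted because it requires a longer revenue history.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Case:
    customer_id: str
    contacts: int            # interactions needed before the case was resolved
    hours_to_resolve: float
    handling_cost: float


def experience_metrics(cases: list[Case]) -> dict[str, float]:
    """Summarize a non-empty list of resolved cases."""
    customers = {c.customer_id for c in cases}
    return {
        "avg_time_to_resolution_hours": mean(c.hours_to_resolve for c in cases),
        "first_contact_resolution_rate": sum(c.contacts == 1 for c in cases) / len(cases),
        "cost_to_serve_per_customer": sum(c.handling_cost for c in cases) / len(customers),
    }
```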

What distinguishes more advanced organizations is not only the choice of metrics, but how those metrics are validated. Instead of relying on directional comparisons before and after implementation, they increasingly use structured experimentation to isolate causality. Controlled testing frameworks, including A/B and multivariate approaches, are applied to understand whether observed changes are directly attributable to specific interventions or influenced by external factors.

This discipline changes the nature of decision-making. It allows institutions to distinguish between correlation and impact, particularly in environments where multiple initiatives are running in parallel. As a result, scaling decisions are based on evidence of causal improvement rather than assumed contribution.
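For a conversion-style outcome, the simplest form of such a test compares the rate in a control group with the rate in a treated group. The sketch below uses a standard two-proportion z-test with a pooled standard error; the function name and the sample figures in the note that follows are illustrative, and the test assumes customers were randomly assigned to the two groups.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates B minus A."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0, 1.0  # no variation in either group, nothing to test
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value under the normal approximation.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

With hypothetical figures of 100 conversions out of 1,000 in control and 130 out of 1,000 in treatment, the z-score is roughly 2.1 and the p-value falls below 0.05, which is the kind of evidence that separates a measured lift from an assumed one.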

The implications are significant. Experience management becomes measurable in economic terms, not only experiential ones. Institutions are able to link changes in journey design, interaction quality, and decisioning logic to quantifiable shifts in value creation. This creates a more disciplined foundation for prioritization, investment, and governance of experience transformation efforts.

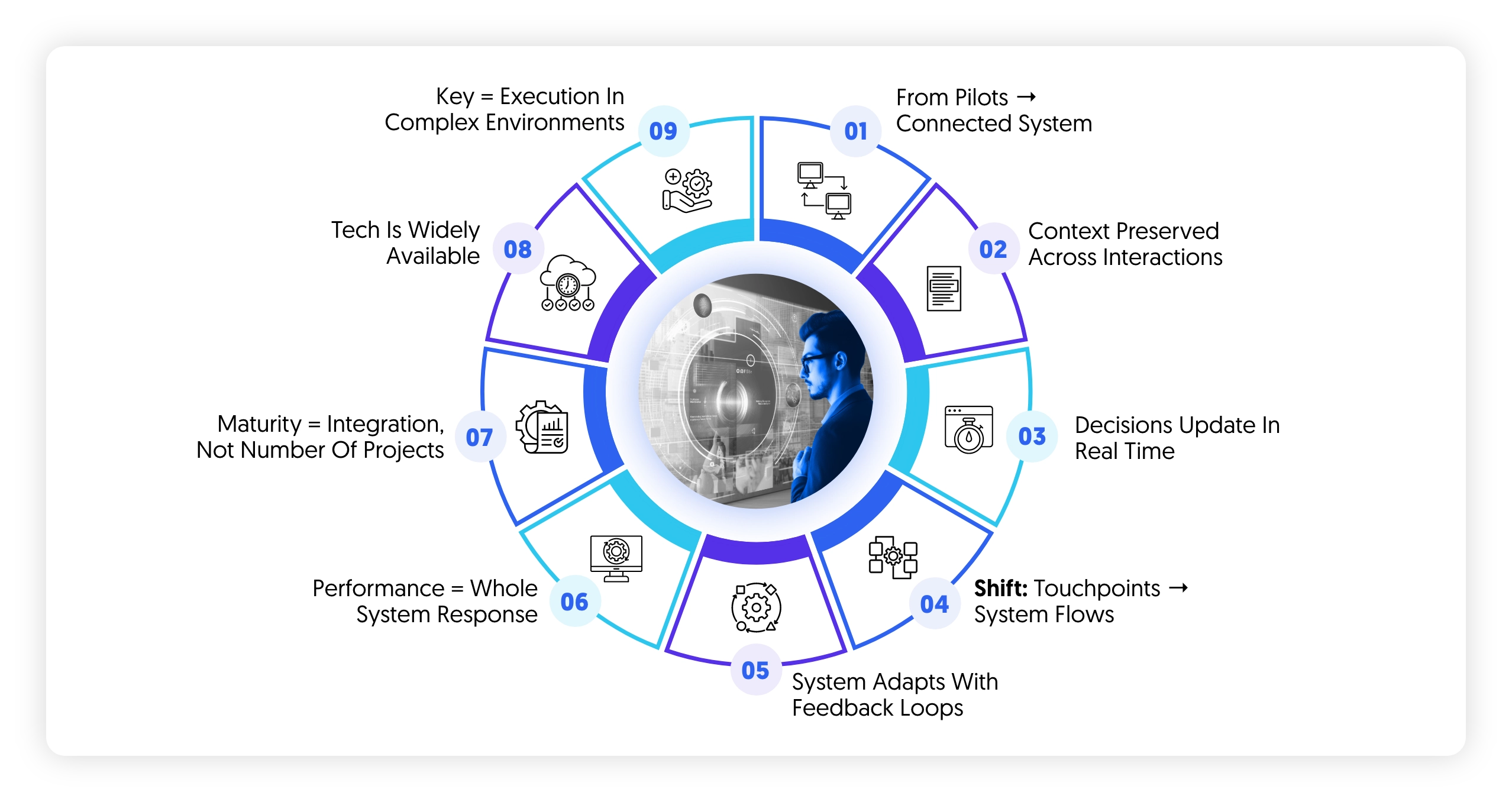

The path forward: from pilots to systems

The transition toward AI-enabled experience management in banking rarely succeeds as a large-scale, top-down transformation. In practice, it begins in a far more constrained and pragmatic way, shaped by operational pressure, data readiness, and the need to demonstrate value early.

Leading institutions typically focus their initial efforts on areas where complexity is high but outcomes are visible. Contact centers and onboarding journeys are common starting points because they concentrate a large volume of interactions, expose structural inefficiencies, and offer clear performance baselines. These environments also allow for controlled experimentation, where improvements in resolution speed, conversion, or effort reduction can be directly measured.

The selection of use cases in these early phases is less about technological ambition and more about strategic clarity. The focus is on identifying processes where fragmentation is most visible and where integration of intelligence into the flow of work can produce immediate operational and customer impact.

However, what differentiates institutions that progress beyond pilots is not the success of these initial deployments, but what happens after them.

The critical shift is toward integration across previously isolated capabilities. Decisioning engines begin to interact with orchestration layers, allowing real-time signals to influence journey flows rather than remaining confined to static rules or campaign logic. Knowledge systems are standardized across channels, reducing variability in how information is accessed and applied by both customers and employees. Governance mechanisms are embedded directly into deployment pipelines, ensuring that controls, approvals, and auditability are not external checkpoints but part of the system itself.

This integration changes the nature of experience management. It moves from a set of independently optimized use cases to a connected operating model. Context is preserved across interactions, decisions evolve continuously based on updated signals, and interventions can be triggered dynamically across channels and stages of the journey.

Over time, this leads to a structural shift. Experience management becomes less about managing individual touchpoints and more about managing a system of interdependent flows. Performance is no longer assessed in isolation at the level of a channel or campaign, but as an outcome of how effectively the system as a whole responds to customer needs.

In more advanced implementations, this system begins to exhibit adaptive behavior. Feedback loops from outcomes, customer responses, and operational signals are used to refine decisioning logic, improve orchestration rules, and update knowledge structures. This creates a continuous cycle in which the experience system evolves based on observed performance rather than periodic redesign.

The implication is that maturity is not defined by the number of use cases deployed, but by the degree of integration across these layers.

At the same time, the conditions that enable this shift are becoming more widely available. The underlying technologies supporting real-time decisioning, language-based interaction, and knowledge retrieval are no longer limited to a small number of frontier institutions. This reduces the barrier to entry and increases the pace at which capabilities can be replicated across the market.

As a result, differentiation is moving away from access to technology and toward the ability to operationalize it within complex, regulated environments. Execution now depends on whether institutions can align data, systems, governance, and operating models into a coherent structure that supports scale.

Institutions that continue to treat AI as a collection of independent initiatives are likely to see localized improvements, particularly in efficiency and conversion. However, these gains tend to plateau when systems remain disconnected.

Those that reframe the challenge around system design rather than tool deployment are positioned differently. By integrating decisioning, interaction, orchestration, and governance into a unified model, they are able to convert incremental improvements into sustained structural advantage.

The direction of travel is already clear across leading markets. The remaining variable is the speed at which institutions are able to move from experimentation to integration, before the gap between system-level leaders and pilot-level adopters becomes difficult to close.